Jarvis

Jarvis is the voice-controlled software I started creating in 2012. This page lays out its details and history. You can see rapid development between 2012 and 2013.

Motivation

The original idea was to create a human-like program that would be a genuine friend. That was a very lofty ambition, and although I couldn’t achieve it, I managed to create a computer program that I can speak to and that can speak back to me.

Incarnations

Pre-Jarvis

chem

April 2012

I needed some way to look things up using natural language, and I decided that the periodic table was a good place to start. So, chem was intended to be a periodic table that could be searched using natural language queries. Unfortunately, it looks like I abandoned it in favor of DARS before it was completed.

DARS

April-June 2012

The Database Access and Retrieval System (DARS) performs natural language lookups on any SQLite database, which makes it a general solution to the problem that chem was trying to solve. The video below shows a demo using a periodic table database.

Of course, it’s long abandoned, but it still works. The name is obviously inspired by Star Trek’s Library Computer Access and Retrieval System (LCARS). I tried to include a basic personality, which really just meant that it responded to greetings and thanks.

DARS built a mental map from the SQLite3 databases that were attached and answered questions about them.

DARS was the first functional piece of software that allowed me to query data using natural-ish language. I created it after realizing that chem was too specialized and that databases would be an easier and more general solution to the problem of looking things up in a knowledge base. I wrote it in Objective-C, and used a new periodic table SQLite database. Since DARS was flexible, any SQLite database could be queried as long as there was a DARS adaptor to tell DARS the natural language names of the various columns.
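To make the adaptor idea concrete, here is a minimal sketch in Python rather than the original Objective-C. Everything in it is an assumption for illustration: the `ADAPTOR` layout, the `elements` table, and the single "<property> of <thing>" question shape are hypothetical, and the real DARS adaptors may have looked quite different.

```python
import sqlite3

# Hypothetical adaptor: maps natural-language names to a table's columns.
ADAPTOR = {
    "table": "elements",
    "key_column": "name",
    "columns": {
        "atomic number": "atomic_number",
        "symbol": "symbol",
    },
}


def answer(conn, question):
    """Answer questions shaped like 'What is the <property> of <thing>?'."""
    text = question.lower().rstrip("?").strip()
    table = ADAPTOR["table"]
    key_column = ADAPTOR["key_column"]
    for phrase, column in ADAPTOR["columns"].items():
        marker = phrase + " of "
        if marker in text:
            subject = text.split(marker, 1)[1].strip()
            # Identifiers come from the adaptor, never from the question;
            # the user-supplied value is bound as a query parameter.
            row = conn.execute(
                f"SELECT {column} FROM {table} WHERE lower({key_column}) = ?",
                (subject,),
            ).fetchone()
            return row[0] if row else None
    return None


def demo_connection():
    """Build a tiny in-memory periodic table for the example."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE elements (name TEXT, symbol TEXT, atomic_number INTEGER)"
    )
    conn.executemany(
        "INSERT INTO elements VALUES (?, ?, ?)",
        [("Hydrogen", "H", 1), ("Helium", "He", 2)],
    )
    return conn
```

With this sketch, `answer(demo_connection(), "What is the atomic number of helium?")` looks up the `atomic_number` column for the row named Helium. Pointing it at a different database would only require a different adaptor dictionary.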

Computer Command/Satellite

July-August 2012

The pair of Computer Command and Computer Satellite was my first voice-controlled system, and a direct precursor to Jarvis 1.

Computer Satellite recognized text, which was then sent to Computer Command, where the action specified was carried out. It could do things like open apps, change the system volume, etc. However, it was implemented as a series of primitive equals, prefix and suffix checks, and every time the recognized text deviated from those (which happened very often), the app would just fail.

In addition, speech recognition on the devices of the time was painfully slow, and I had to unlock my iOS device and launch the app before I could talk to my computer.
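The kind of matching described above can be sketched as follows. This is an illustration in Python, not the original code; the command phrases and action names are made up.

```python
def match_command(text):
    """Return an (action, argument) pair, or None when no rule fits."""
    text = text.strip().lower()
    if text == "mute":                      # equals check
        return ("set_volume", "0")
    if text.startswith("open "):            # prefix check
        return ("open_app", text[len("open "):])
    if text.endswith("volume up"):          # suffix check
        return ("change_volume", "+")
    return None                             # anything else simply fails
```

The brittleness is easy to see: `match_command("open Safari")` matches the prefix rule, but `match_command("please open Safari")` returns `None` because the extra word breaks the check.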

Computer Command/Satellite was awesome but the way it was structured and built wasn’t ideal.

Jarvis 1

2012-2013

Jarvis 1 was much bigger than Computer Command, with lots more tricks. It even had a cute self-destruct routine. Jarvis 1 also used Pocketsphinx to perform speech recognition on my mid-2010 MacBook Pro.

The biggest change, however, was how it handled tasks. Computer Command’s huge 180-line -processInput: method was broken up into small action handlers that conformed to a shared protocol. This way, I could break down the method into small classes spread out over a few files, and concentrate on figuring out a better way of handling input text.
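In Python terms, the handler structure might look roughly like the sketch below. The original was Objective-C, and the protocol's method names here (`can_handle`, `handle`) and the two example handlers are assumptions for illustration only.

```python
from typing import Optional, Protocol, Sequence


class ActionHandler(Protocol):
    """The shared protocol every handler conforms to (hypothetical names)."""

    def can_handle(self, text: str) -> bool: ...

    def handle(self, text: str) -> str: ...


class OpenAppHandler:
    def can_handle(self, text: str) -> bool:
        return text.lower().startswith("open ")

    def handle(self, text: str) -> str:
        return "opening " + text[len("open "):]


class VolumeHandler:
    def can_handle(self, text: str) -> bool:
        return "volume" in text.lower()

    def handle(self, text: str) -> str:
        return "volume up" if "up" in text.lower() else "volume down"


def process_input(text: str, handlers: Sequence[ActionHandler]) -> Optional[str]:
    """Hand the text to the first handler that claims it."""
    for handler in handlers:
        if handler.can_handle(text):
            return handler.handle(text)
    return None


HANDLERS = [OpenAppHandler(), VolumeHandler()]
```

The dispatcher stays a few lines long no matter how many handlers exist, and each handler can live in its own file.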

I learnt a lot along the way: about language models and the algorithms behind them, and about HMMs. I discovered the PageRank and TextRank algorithms and explored the Objective-C private APIs in both OS X and iOS, among many other things. It also gave me some real-world experience with SQLite databases (which I normally interact with indirectly using Core Data) and the speed that indexes provide.

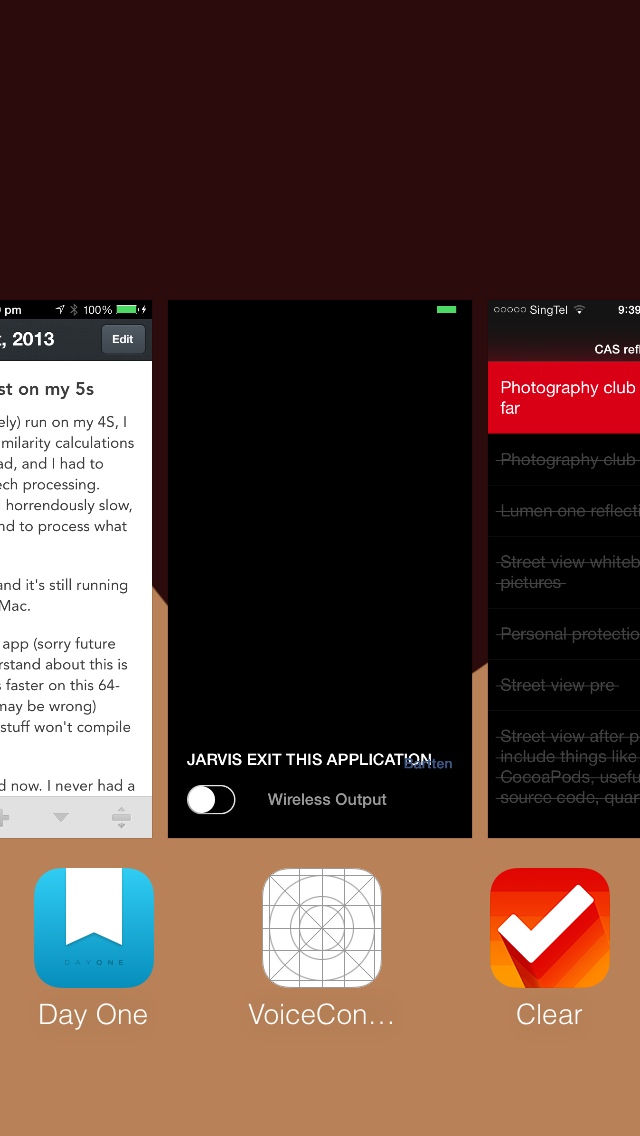

There was also a companion app that was basically a microphone, streaming its superior mic audio (beamforming mics! Noise cancelling!) to the Mac when needed. Using private APIs, I could also launch and quit apps on my iPad and iPhone using Jarvis. Pretty cool.

Jarvis 2

2013

Several things persuaded me to create Jarvis 2:

- Jarvis 1 was getting too big and unwieldy.

- I had also been reading up on the UNIX architecture, which emphasized the benefits of a bunch of small programs linked together instead of having one large one.

- In addition, I had found that there were so many programming paradigms, and it was all so fascinating. However, as some languages are so different (e.g. Prolog, Haskell and C), it wasn’t easy to make them work together. I also realized that different tasks are easier in different languages, and some languages even make solutions to problems obvious.

These ideas shaped the architecture of Jarvis 2.

Jarvis 2 was an experiment to see if a bunch of separate scripts could be made to work together. Each script was essentially a data handler of Jarvis 1 reimplemented to use this decentralized architecture. So, in order to allow me to write scripts and programs on the devices and in the languages that suited each task best, Jarvis 2 used TCP sockets to transfer JSON data.

The development speed benefits were also pretty clear. I could tweak one of the handlers, save it, and Jarvis would already be using the new file; I didn’t have to recompile a behemoth program.

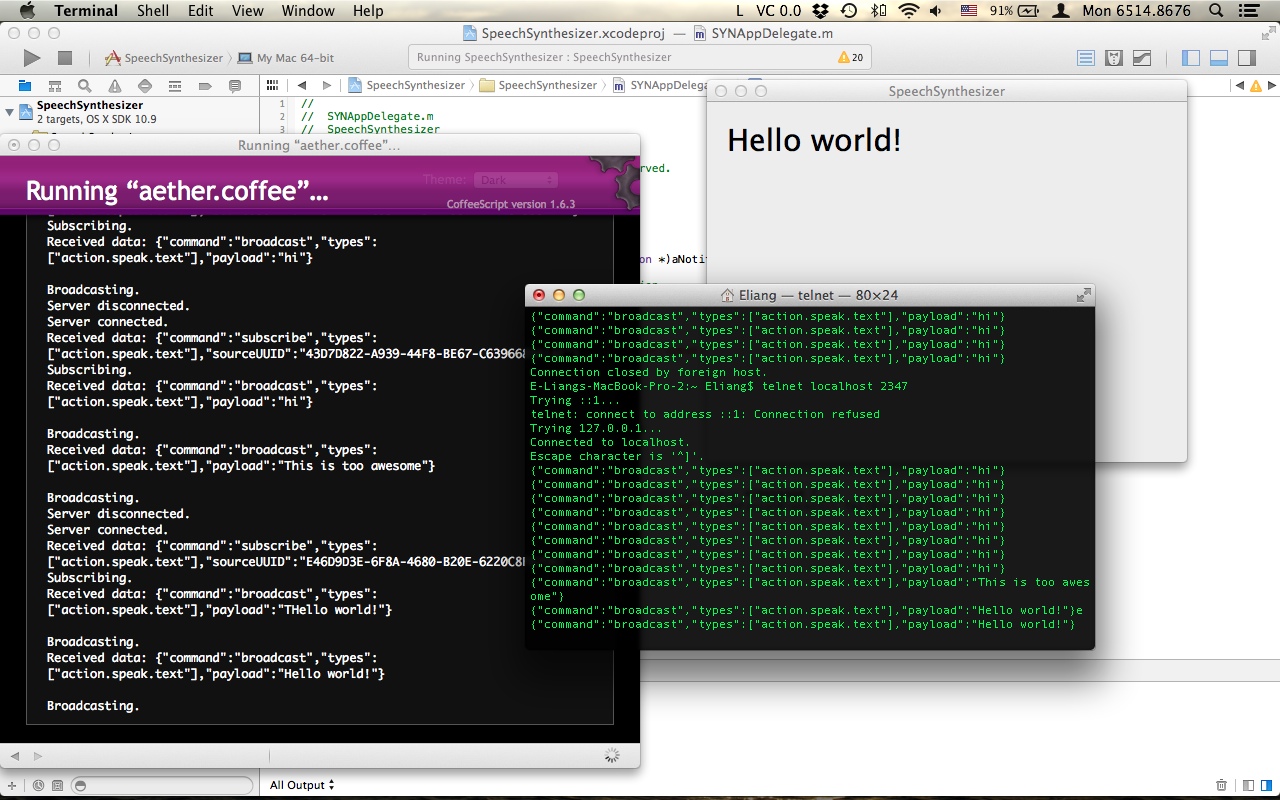

The screenshot below, taken in Nov 2013, shows me sending a command into the Aether, which is picked up by an early SpeechSynthesizer app.

It was a brilliant design, but unfortunately TCP sockets weren’t robust enough for my uses, and sometimes messages would be broken up and the system wouldn’t work.
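For context on the broken-up messages: TCP delivers a stream of bytes and does not preserve message boundaries, so a single JSON message can arrive in several pieces, or several messages can arrive in one read. One common way to cope is to frame each message, for example by ending it with a newline and buffering until a whole line has arrived. The sketch below shows that technique in Python. It is not what Jarvis 2 did, and the class and function names are hypothetical.

```python
import json


def encode(message) -> bytes:
    """Serialize one message as a single newline-terminated JSON line."""
    # json.dumps escapes newlines inside strings, so "\n" only ends a message.
    return json.dumps(message).encode("utf-8") + b"\n"


class JSONLineDecoder:
    """Reassembles newline-delimited JSON messages from arbitrary chunks."""

    def __init__(self):
        self._buffer = b""

    def feed(self, chunk: bytes) -> list:
        """Add received bytes and return every message completed so far."""
        self._buffer += chunk
        # Everything after the last newline is an incomplete message: keep it.
        *lines, self._buffer = self._buffer.split(b"\n")
        return [json.loads(line) for line in lines if line.strip()]
```

A receiver would call `feed()` with whatever each `recv()` returns and act only on the messages it gets back, so a message split across two reads is simply completed by the second one.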

Jarvis 2.5

2014

Due to the problems with TCP, I changed the IPC mechanism drastically and used plaintext files instead. The core Jarvis 2.5 scripts use the UNIX tail command to watch a few files for changes, and act on them accordingly.
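The file-watching idea can be sketched in Python as below. This is a polling stand-in for what `tail` does, not the actual Jarvis 2.5 scripts; the function names and the polling interval are assumptions.

```python
import time


def read_new_lines(path, offset):
    """Return (complete lines appended since offset, new offset)."""
    with open(path, "rb") as f:
        f.seek(offset)
        data = f.read()
    # Consume only up to the last newline, so a half-written line
    # is left in place and picked up on a later call.
    end = data.rfind(b"\n") + 1
    lines = data[:end].decode("utf-8").splitlines()
    return lines, offset + end


def watch(path, on_line, poll_seconds=0.5):
    """Call on_line for every line appended to the file. Runs forever."""
    offset = 0
    while True:
        lines, offset = read_new_lines(path, offset)
        for line in lines:
            on_line(line)
        time.sleep(poll_seconds)
```

Any program that can append a line to a file can then send a message, which is what makes plaintext files attractive as a lowest-common-denominator IPC mechanism.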

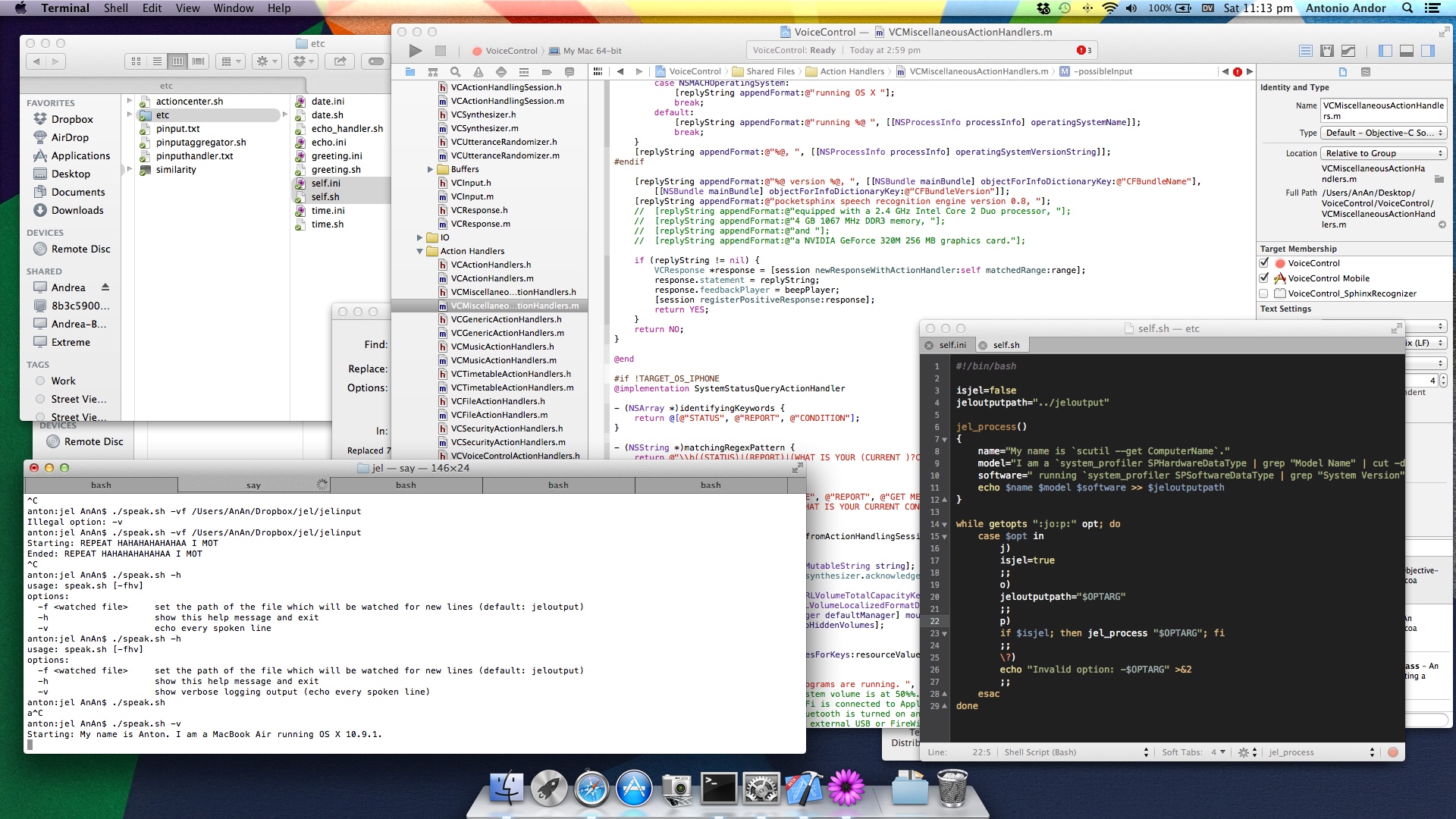

I ported a few simple action handlers over to this new architecture. Each action handler is specified by two files: an INI configuration file, and the handler itself, which can be any executable file type. This is the flexible part. Right now all the handlers I’ve implemented are Bash scripts, but there’s nothing stopping me from making Python, Java, C, Vala, etc. programs instead.

The second screenshot below, taken in Feb 2014, shows a Bash script speaking input it received in the jeloutput file.
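As an illustration of the two-file idea, the sketch below loads a handler description from INI text and runs the matching executable. The INI layout (a `[handler]` section with `trigger` and `executable` keys) and the path in it are invented for this example; the actual Jarvis 2.5 configuration format isn't shown here.

```python
import configparser
import subprocess

# Hypothetical configuration for one handler.
EXAMPLE_INI = """\
[handler]
trigger = say
executable = ./handlers/say.sh
"""


def load_handler(ini_text):
    """Parse one handler's INI configuration into a plain dict."""
    parser = configparser.ConfigParser()
    parser.read_string(ini_text)
    section = parser["handler"]
    return {"trigger": section["trigger"], "executable": section["executable"]}


def dispatch(text, handlers):
    """Run the first handler whose trigger word starts the text."""
    for handler in handlers:
        prefix = handler["trigger"] + " "
        if text.startswith(prefix):
            # The handler can be any executable: Bash, Python, a C binary...
            result = subprocess.run(
                [handler["executable"], text[len(prefix):]],
                capture_output=True,
                text=True,
                check=True,
            )
            return result.stdout.strip()
    return None
```

Because the dispatcher only ever sees a path to an executable, the language the handler is written in never matters to it.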

Jarvis 3

2014-2016

Following the success of Jarvis 2, I tried to make Jarvis understand things, instead of simply performing actions. In order to do that, I had to set up a knowledge base. I introduced Forms and Functions: Forms are (potentially abstract) things, and Functions are the actions that can be performed on them.

While Jarvis 2 introduced the notion of language independence, Jarvis 3 introduces the concept of device and program independence. With everything simply a Form and Function, different devices and programs can define Functions for the same Form. The beauty is that Jarvis 3 abstracts away the platform and device, and you can interact with one system that spans across everything you use.

I can have scripts running on a Raspberry Pi controlling my lights, an Android phone receiving and reading out SMSes, and a Mac and iPad launching and quitting applications. Every task is done by the device that does it best.
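One way to picture Forms and Functions is as a shared registry in which each device contributes the Functions it can perform on a Form. The Python sketch below is only a guess at the shape of the idea; the `Registry` class, its method names, and the example devices are hypothetical and not taken from Jarvis 3.

```python
class Registry:
    """Maps a Form and a Function name to the device that implements it."""

    def __init__(self):
        self._functions = {}  # form -> {function name: (device, callable)}

    def define(self, form, function, device, implementation):
        """Let a device register a Function for a Form."""
        self._functions.setdefault(form, {})[function] = (device, implementation)

    def perform(self, form, function, *args):
        """Run a Function without the caller knowing which device owns it."""
        try:
            device, implementation = self._functions[form][function]
        except KeyError:
            raise LookupError(f"no device can {function!r} a {form!r}") from None
        return device, implementation(*args)


registry = Registry()
registry.define("light", "turn on", "raspberry-pi",
                lambda room: f"{room} light on")
registry.define("application", "launch", "mac",
                lambda name: f"{name} launched")
```

A caller just asks the registry to `perform("light", "turn on", "kitchen")`; which device carries it out is an implementation detail.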

Super elegant design, if I may say so myself.

Unfortunately, the implementation is lagging a little. It’s been 2 years, but I only have a set of Raspberry Pi scripts built on Jarvis 3 controlling my lights (my Spiderweb project).

Todo

Jarvis 3 is barely getting off the ground. I’ll need to implement more things in Jarvis 3 so that it’s more useful, such as the speech recognition and synthesis that have been in all previous versions of Jarvis.

Jarvis has immense potential and I’m very proud of it. If anyone would like to work with me on it or point me to any interesting resources, just hit me up at @taneliang or email me!